- Sabrina Ramonov 🍄

Test Driving ChatGPT-4o (Part 2)

ChatGPT-4o vs Math

In this series, I test drive OpenAI’s multimodal ChatGPT-4o.

For part 1, click here.

Inspired by ChatGPT vs Math (2023), let’s see how ChatGPT-4o performs.

I want to know:

- Can GPT-4o solve this problem by analyzing just the prompt?
- Can GPT-4o solve this problem by combining prompt and image?
- Can GPT-4o solve this problem with the help of prompt engineering?

Math Problem

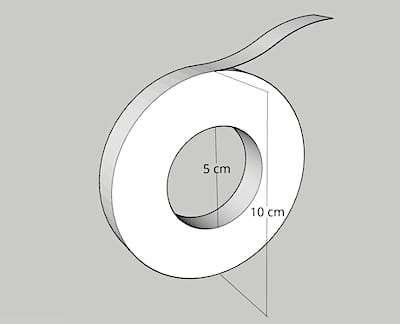

Here’s the image of the math problem:

Problem Statement

There is a roll of tape. The tape is 100 meters long when unrolled. When rolled up, the outer diameter is 10 cm, and the inner diameter is 5 cm. How thick is the tape?

Solution

Reduce the problem to 2 dimensions.

Here’s an ASCII Unrolled Tape:

▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬Unrolled Tape Area = T * L

L = length

T = thickness

Here’s an ASCII Rolled Tape:

[ASCII drawing of the rolled tape: an outer circle of radius R around an inner circle of radius r]

Rolled Tape Area = \pi (R^2 - r^2)

R = outer radius

r = inner radius

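The areas are the same!

So T * L = \pi (R^2 - r^2).

With L = 100 m = 10,000 cm, R = 5 cm, and r = 2.5 cm, we can easily solve for thickness: T = \pi (R^2 - r^2) / L ≈ 0.00589 cm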
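As a quick check on the arithmetic, here is a minimal Python sketch of the equal-area argument. The function and parameter names are my own, chosen for illustration; the only inputs are the numbers from the problem statement, converted to centimeters.

```python
import math


def tape_thickness_cm(length_cm: float,
                      outer_diameter_cm: float,
                      inner_diameter_cm: float) -> float:
    """Thickness T from T * L = pi * (R^2 - r^2), all lengths in cm."""
    outer_r = outer_diameter_cm / 2  # R
    inner_r = inner_diameter_cm / 2  # r
    # Area of the ring seen when looking at the roll from the side.
    ring_area = math.pi * (outer_r ** 2 - inner_r ** 2)
    # The unrolled tape is a long thin rectangle with the same area.
    return ring_area / length_cm


# 100 m of tape is 10,000 cm.
thickness = tape_thickness_cm(length_cm=100 * 100,
                              outer_diameter_cm=10,
                              inner_diameter_cm=5)
print(f"T = {thickness:.5f} cm")  # T = 0.00589 cm
```

Keeping every length in the same unit matters here: mixing meters and centimeters is an easy way to be off by a factor of 100.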

Overview of Experiments

Here are my varied experiments:

1. Prompt only, no image
2. Zero-shot Chain-of-Thought
3. Dimensions inside the image, missing data
4. Prompt and image
5. Zero-shot Chain-of-Thought and image

I run each experiment 3 times due to the probabilistic nature of LLMs.

Despite the same input, there is no guarantee I’ll get the same outputs.
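I ran these experiments by hand in the ChatGPT interface, but the repeat-and-tally idea can be sketched in code. Everything below is hypothetical: `run_trials`, `grade_by_substring`, and the canned replies are illustrative stand-ins, not real GPT-4o output and not how the runs in this post were graded.

```python
import itertools
from collections import Counter
from typing import Callable


def run_trials(ask: Callable[[str], str],
               grade: Callable[[str], str],
               prompt: str,
               runs: int = 3) -> Counter:
    """Send the same prompt `runs` times and tally the graded outcomes."""
    return Counter(grade(ask(prompt)) for _ in range(runs))


def grade_by_substring(reply: str) -> str:
    # Hypothetical grader: looks for the expected value in the reply text.
    # A real evaluation would need a human (or a sturdier parser) to judge.
    return "correct" if "0.00589" in reply else "incorrect"


# Stand-in for a model call. These replies are made up to show that the
# same prompt can come back with different answers on different runs.
canned_replies = itertools.cycle(
    ["T = 0.00589 cm", "T = 2.5 cm", "T = 0.00589 cm"]
)


def fake_ask(prompt: str) -> str:
    return next(canned_replies)


tally = run_trials(fake_ask, grade_by_substring, "How thick is the tape?")
print(tally)
```

Swapping `fake_ask` for a function that calls an actual model would turn this into a small evaluation loop, with the caveat that substring matching is a crude grader.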

I designed the experiments to evaluate the impact of:

- one modality (text only)
- multiple modalities (text + image)
- prompt engineering (Chain-of-Thought)

Which approach leads to superior outcomes?

Take a guess now and see if you’re right 🙂

1. Prompt Only, No Image

First, I test one modality with no prompt engineering:

I give GPT-4o the text prompt, without the image.

There is a roll of tape. The tape is 100 meters long when unrolled. When rolled up, the outer diameter is 10 cm, and the inner diameter is 5 cm. How thick is the tape?

1st run - choke

GPT-4o gives up after teasing me:

“Given the complexity, let’s solve this equation numerically”.

ChatGPT-4o session @ sabrina.dev

2nd run - correct

Yay!

GPT-4o gets the right answer on the 2nd try, without the image, without any prompt engineering.

3rd run - incorrect

Unfortunately, the 3rd try was wrong.

The probabilistic nature of LLMs rears its head…

2. Zero-Shot Chain-of-Thought

Second, I test one modality, assisted by prompt engineering:

I give GPT-4o the text prompt, without the image.

Then I add a simple prompt engineering technique:

Take a deep breath and work on this problem step-by-step.

Seems too simple, right? 😅

This prompt engineering technique is called zero-shot Chain-of-Thought.

It has been shown to improve LLM performance on logic and reasoning tasks by prompting the model to spell out the intermediate steps leading to an answer.

Full prompt:

There is a roll of tape. The tape is 100 meters long when unrolled. When rolled up, the outer diameter is 10 cm, and the inner diameter is 5 cm. How thick is the tape?

Take a deep breath and work on this problem step-by-step.

1st run - correct

2nd run - correct

3rd run - correct

Quite a surprise: this absurdly simple prompt engineering technique resulted in 3/3 correct answers!
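The full prompt in this section is nothing more than the problem statement followed by the step-by-step instruction. A minimal sketch of that construction, with a helper name (`with_zero_shot_cot`) that is my own invention for illustration:

```python
PROBLEM = (
    "There is a roll of tape. The tape is 100 meters long when unrolled. "
    "When rolled up, the outer diameter is 10 cm, and the inner diameter "
    "is 5 cm. How thick is the tape?"
)

COT_SUFFIX = "Take a deep breath and work on this problem step-by-step."


def with_zero_shot_cot(problem: str, suffix: str = COT_SUFFIX) -> str:
    """Append the step-by-step instruction to an unchanged problem statement."""
    return f"{problem}\n\n{suffix}"


full_prompt = with_zero_shot_cot(PROBLEM)
print(full_prompt)
```

It is "zero-shot" because no worked examples are included: the only change from experiment 1 is the extra sentence at the end.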

3. Dimensions Inside Image, Missing Data

Third, I test multimodal input (image) with a minimal text prompt.

I remove dimension data from the text prompt, so GPT-4o must analyze the image correctly to extract the tape roll’s dimensions (outer and inner diameter).

However, the unrolled length of the tape appears in neither the image nor the text prompt.

I expect GPT-4o’s output to be something like, “without knowing the length we can't determine it”.

Image uploaded to ChatGPT-4o

There is a roll of tape with dimensions specified in the picture. How thick is the tape?

1st run - incorrect

2nd run - incorrect

3rd run - incorrect

Sabrina Ramonov @ sabrina.dev

Interestingly, ChatGPT-4o successfully analyzes the image to determine the outer diameter (10 cm) and inner diameter (5 cm).

But it misinterprets the problem statement:

GPT-4o interprets “how thick is the tape” as referring to the cross-section of the tape roll, rather than the thickness of a piece of tape.

Recall the original prompt, which includes:

- dimension data
- length of tape unrolled
- the concept of rolled vs unrolled tape

There is a roll of tape. The tape is 100 meters long when unrolled. When rolled up, the outer diameter is 10 cm, and the inner diameter is 5 cm. How thick is the tape?

Missing this important context, GPT-4o should’ve said it can’t solve the problem. But it went ahead and tried anyway with a different interpretation, indeed a pretty reasonable interpretation given the data at hand.

4. Prompt and Image

Fourth, I test multimodal input (image) with a text prompt that includes the unrolled length of the tape.

There is a roll of tape with dimensions specified in the picture. The tape is 100 meters long when unrolled. How thick is the tape?

Image uploaded to ChatGPT-4o

1st run - choke

Well, this is amusing…

GPT-4o notices its estimate seems unusually large and tries to course correct!

But then it gives up... dying with a grammatically incorrect last sentence:

I will re-calculation next response

2nd run - incorrect

The 2nd run is better, still wrong, but at least GPT-4o didn’t choke.

3rd run - correct